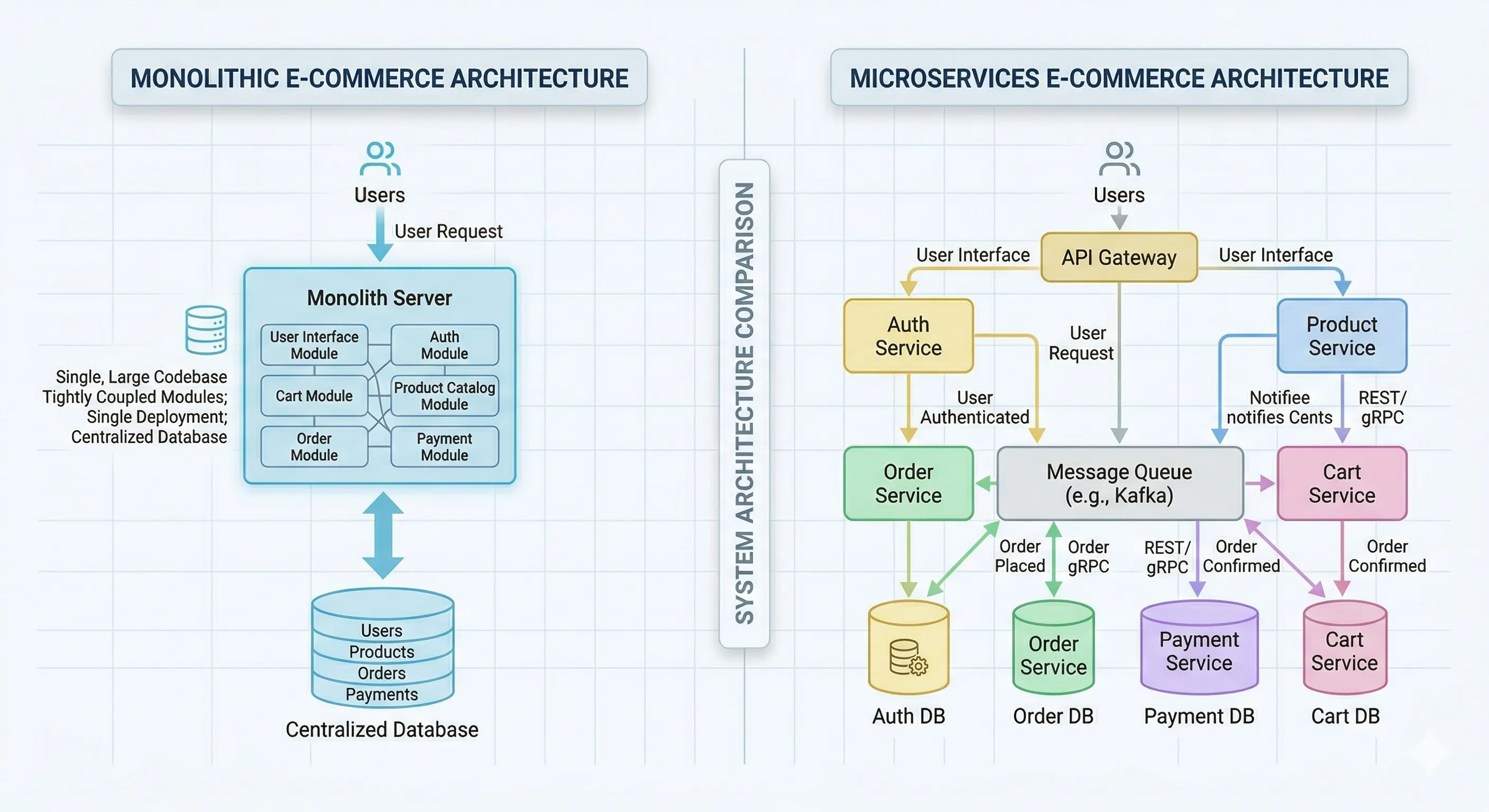

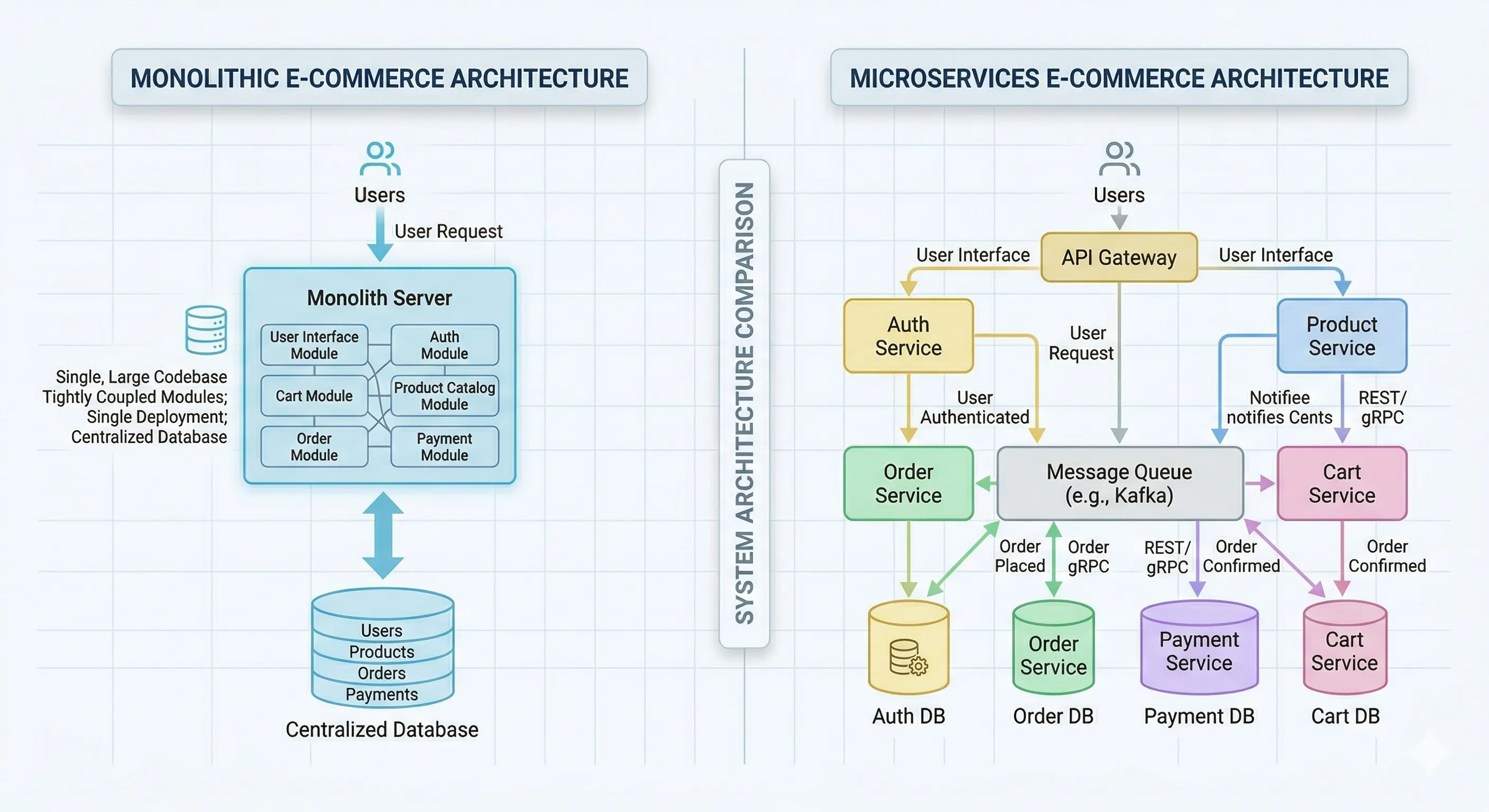

Monolith vs Microservices

Compare monolith and microservices for e-commerce backend design, including scaling, reliability, failure handling, patterns, trade-offs, and practical adoption.

Welcome to my digital notebook. Here, I break down complex technical challenges into simple guides, sharing what I learn while building modern apps and cloud systems.

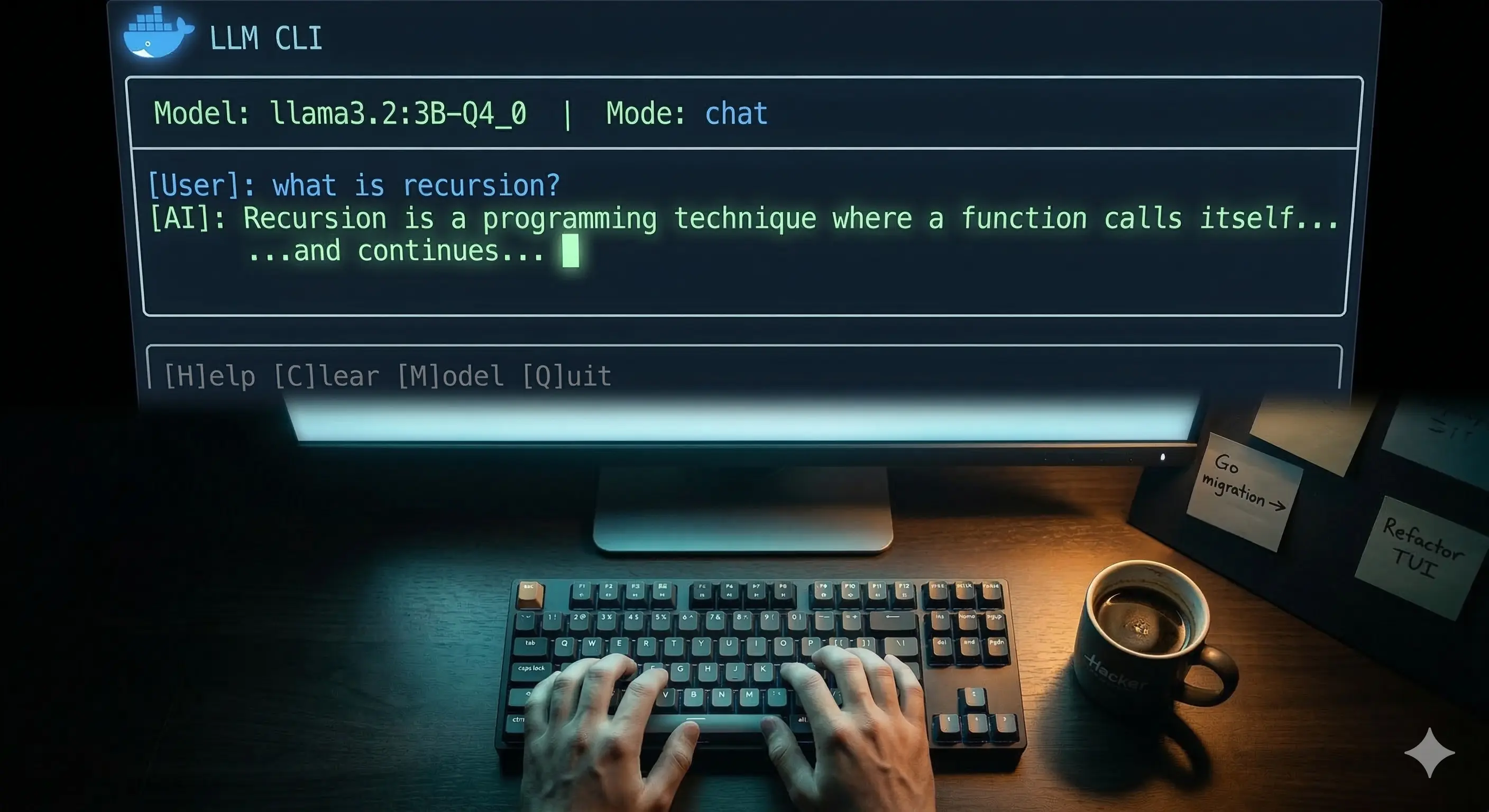

In the final part of this series, I build a complete terminal AI chat app in TypeScript using Docker Model Runner. One binary called llm that anyone can run — streaming responses, conversation memory, preset modes, auto model detection, and history saved to disk. I walk through the full architecture, every design decision, and what I learned. Plus — why I'm migrating this to Go next.

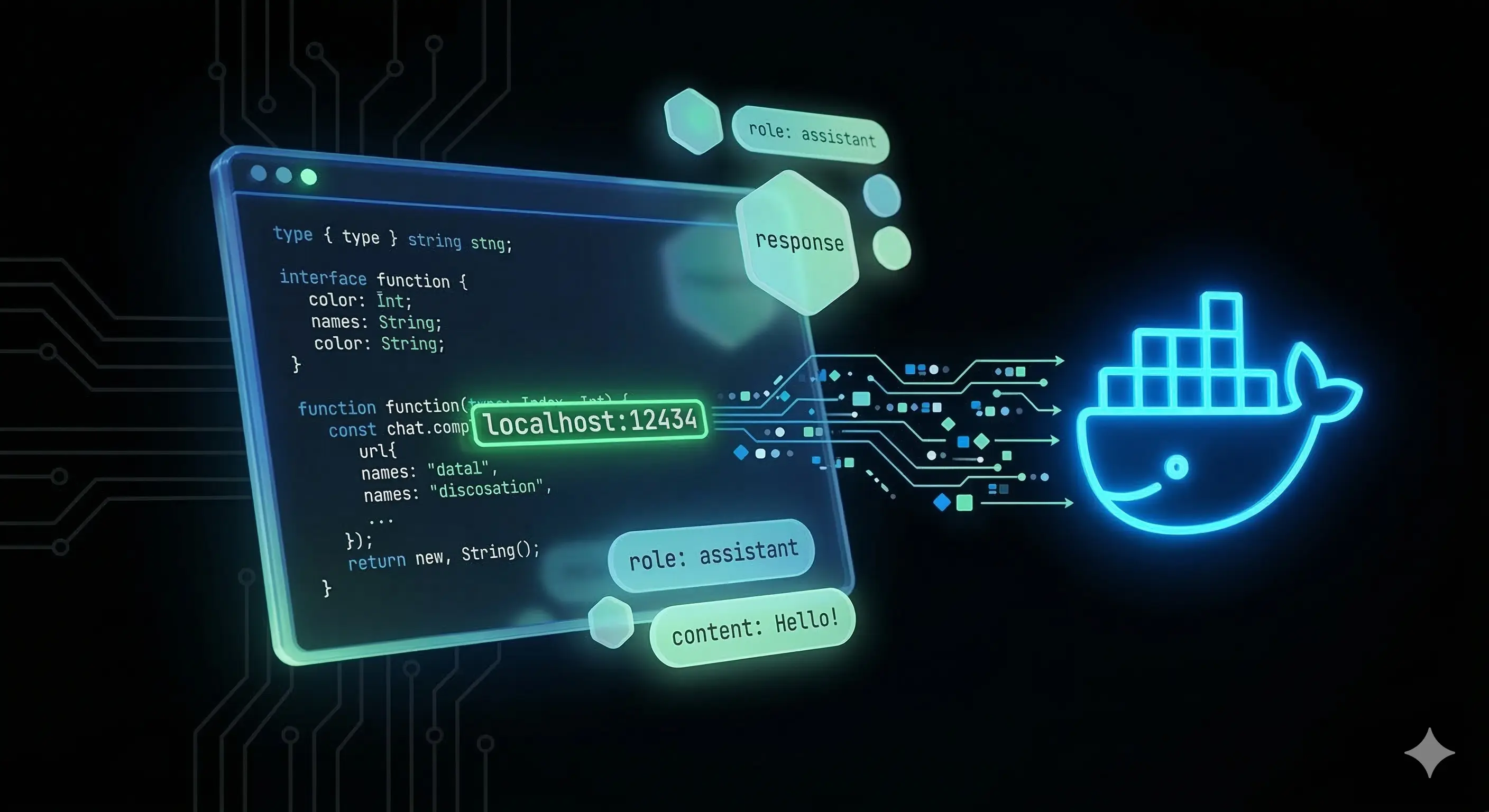

In Part 1 we set up Docker Model Runner. Now we actually use it. In this post I walk through calling your local LLM programmatically using TypeScript and the OpenAI SDK — no API key, no cloud. We build three scripts from scratch: a single question chat, a streaming response that feels like ChatGPT, and a multi-turn conversation with memory. Plus — DMR's native endpoints for managing models directly from your code.

As a CS student, I wanted to experiment with LLMs without paying for API access or sending my data to the cloud. Turns out, if you already have Docker installed, you're 90% there. This is Part 1 of a series where I set up Docker Model Runner, explain the GenAI vocabulary that confuses everyone (parameters, quantization, context window), and get a 3B model running locally on my laptop — for free.

Stop choosing between full-stack convenience and API flexibility. Learn how to use Next.js as a high-performance proxy for your Express server to achieve elite SEO, secure JWT handshakes, and Lighthouse scores of 100.